Hyper-Converged Infrastructure

GlobeNewswire | October 19, 2023

Alluxio, the data platform company for all data-driven workloads, today introduced Alluxio Enterprise AI, a new high-performance data platform designed to meet the rising demands of Artificial Intelligence (AI) and machine learning (ML) workloads on an enterprise’s data infrastructure. Alluxio Enterprise AI brings together performance, data accessibility, scalability and cost-efficiency to enterprise AI and analytics infrastructure to fuel next-generation data-intensive applications like generative AI, computer vision, natural language processing, large language models and high-performance data analytics.

To stay competitive and achieve stronger business outcomes, enterprises are in a race to modernize their data and AI infrastructure. On this journey, they find that legacy data infrastructure cannot keep pace with next-generation data-intensive AI workloads. Challenges around low performance, data accessibility, GPU scarcity, complex data engineering, and underutilized resources frequently hinder enterprises' ability to extract value from their AI initiatives. According to Gartner®, “the value of operationalized AI lies in the ability to rapidly develop, deploy, adapt and maintain AI across different environments in the enterprise. Given the engineering complexity and the demand for faster time to market, it is critical to develop less rigid AI engineering pipelines or build AI models that can self-adapt in production.” “By 2026, enterprises that have adopted AI engineering practices to build and manage adaptive AI systems will outperform their peers in the operationalizing AI models by at least 25%.”

“Alluxio empowers the world’s leading organizations with the most modern Data & AI platforms, and today we take another significant leap forward,” said Haoyuan Li, Founder and CEO, Alluxio. “Alluxio Enterprise AI provides customers with streamlined solutions for AI and more by enabling enterprises to accelerate AI workloads and maximize value from their data. The leaders of tomorrow will know how to harness transformative AI and become increasingly data-driven with the newest technology for building and maintaining AI infrastructure for performance, seamless access and ease of management.”

With this announcement, Alluxio expands from a one-product portfolio to two product offerings - Alluxio Enterprise AI and Alluxio Enterprise Data - catering to the diverse needs of analytics and AI. Alluxio Enterprise AI is a new product that builds on the years of distributed systems experience accumulated from the previous Alluxio Enterprise Editions, combined with a new architecture that is optimized for AI/ML workloads. Alluxio Enterprise Data is the next-gen version of Alluxio Enterprise Edition, and will continue to be the ideal choice for businesses focused primarily on analytic workloads.

Accelerating End-to-End Machine Learning Pipeline

Alluxio Enterprise AI enables enterprise AI infrastructure to be performant, seamless, scalable and cost-effective on existing data lakes. Alluxio Enterprise AI helps data and AI leaders and practitioners achieve four key objectives in their AI initiatives: high-performance model training and deployment to yield quick business results; seamless data access for workloads across regions and clouds; infinite scale that has been battle-tested at internet-giant scale; and maximized return on investment by working with the existing tech stack instead of costly specialized storage. With Alluxio Enterprise AI, enterprises can expect up to 20x faster training speed compared to commodity storage, up to 10x accelerated model serving, over 90% GPU utilization, and up to 90% lower costs for AI infrastructure.

Alluxio Enterprise AI has a distributed system architecture with decentralized metadata to eliminate bottlenecks when accessing massive numbers of small files, typical of AI workloads. This provides unlimited scalability beyond legacy architectures, regardless of file size or quantity. The distributed cache is tailored to the I/O patterns of AI workloads, which differ from those of traditional analytics. Finally, it supports analytics and full machine learning pipelines - from ingestion to ETL, pre-processing, training and serving.

Alluxio Enterprise AI includes the following key features:

Epic Performance for Model Training and Model Serving - Alluxio Enterprise AI offers significant performance improvements to model training and serving on an enterprise’s existing data lakes. The enhanced set of APIs for model training can deliver up to 20x performance over commodity storage. For model serving, Alluxio provides extreme concurrency and up to 10x acceleration for serving models from offline training clusters for online inference.

Intelligent Distributed Caching Tailored to I/O Patterns of AI Workloads - Alluxio Enterprise AI’s distributed caching feature enables AI engines to read and write data through the high performance Alluxio cache instead of slow data lake storage. Alluxio’s intelligent caching strategies are tailored to the I/O patterns of AI engines – large file sequential access, large file random access, and massive small file access. This optimization delivers high throughput and low latency for data-hungry GPUs. Training clusters are continuously fed data from the high-performance distributed cache, achieving over 90% GPU utilization.

Seamless Data Access for AI Workloads Across On-prem and Cloud Environments - Alluxio Enterprise AI provides a single pane of glass for enterprises to manage AI workloads across diverse infrastructure environments easily. Providing a source of truth of data for the machine learning pipeline, the product fundamentally removes the bottleneck of data lake silos in large enterprises. Sharing data between different business units and geographical locations becomes seamless with a standard data access layer via the Alluxio Enterprise AI platform.

New Distributed System Architecture, Battle-tested At Scale - Alluxio Enterprise AI builds on a new innovative decentralized architecture, DORA (Decentralized Object Repository Architecture). This architecture sets the foundation to provide infinite scale for AI workloads. It allows an AI platform to handle up to 100 billion objects with commodity storage like Amazon S3. Leveraging Alluxio’s proven expertise in distributed systems, this new architecture has addressed the ever-increasing challenges of system scalability, metadata management, high availability, and performance.

“Performance, cost optimization and GPU utilization are critical for optimizing next-generation workloads as organizations seek to scale AI throughout their businesses,” said Mike Leone, Analyst, Enterprise Strategy Group. “Alluxio has a compelling offering that can truly help data and AI teams achieve higher performance, seamless data access, and ease of management for model training and model serving.”

“We've collaborated closely with Alluxio and consider their platform essential to our data infrastructure,” said Rob Collins, Analytics Cloud Engineering Director, Aunalytics. “Aunalytics is enthusiastic about Alluxio's new distributed system for Enterprise AI, recognizing its immense potential in the ever-evolving AI industry.”

“Our in-house-trained large language model powers our Q&A application and recommendation engines, greatly enhancing user experience and engagement,” said Mengyu Hu, Software Engineer in the data platform team, Zhihu. “In our AI infrastructure, Alluxio is at the core and center. Using Alluxio as the data access layer, we’ve significantly enhanced model training performance by 3x and deployment by 10x with GPU utilization doubled. We are excited about Alluxio’s Enterprise AI and its new DORA architecture supporting access to massive small files. This offering gives us confidence in supporting AI applications facing the upcoming artificial intelligence wave.”

Deploying Alluxio in Machine Learning Pipelines

According to Gartner, data accessibility and data volume/complexity is one of the top three barriers to the implementation of AI techniques within an organization. Alluxio Enterprise AI can be added to the existing AI infrastructure consisting of AI compute engines and data lake storage. Sitting in the middle of compute and storage, Alluxio can work across model training and model serving in the machine learning pipeline to achieve optimal speed and cost. For example, using PyTorch as the engine for training and serving, and Amazon S3 as the existing data lake:

Model Training: When a user is training models, the PyTorch data loader loads datasets from a virtual local path /mnt/alluxio_fuse/training_datasets. Rather than loading directly from S3, the data loader reads from the Alluxio cache. During training, the cached datasets are reused across multiple epochs, so overall training speed is no longer bottlenecked by retrieval from S3. In this way, Alluxio speeds up training by shortening data loading and eliminates GPU idle time, increasing GPU utilization. After the models are trained, PyTorch writes the model files to S3 through Alluxio.

Model Serving: The latest trained models need to be deployed to the inference cluster. Multiple TorchServe instances read the model files concurrently from S3. Alluxio caches these latest model files from S3 and serves them to inference clusters with low latency. As a result, downstream AI applications can start inferencing using the most up-to-date models as soon as they are available.
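The model training step above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Alluxio's own code: the class name FileBytesDataset and the helper make_loader are hypothetical, and the only detail taken from the announcement is the mount path /mnt/alluxio_fuse/training_datasets. The sketch assumes that path is exposed as an ordinary POSIX directory, so the training code uses plain file reads and needs no storage-specific client.

```python
import os


class FileBytesDataset:
    """Hypothetical map-style dataset: one sample per file under a root directory.

    It implements __len__ and __getitem__, the protocol that
    torch.utils.data.DataLoader expects from a map-style dataset.
    """

    def __init__(self, root):
        self.root = root
        # Sort the paths so the sample order is deterministic across runs.
        self.paths = sorted(
            os.path.join(dirpath, name)
            for dirpath, _, names in os.walk(root)
            for name in names
        )

    def __len__(self):
        return len(self.paths)

    def __getitem__(self, index):
        # An ordinary POSIX read. When root is a FUSE mount, the mount
        # (not this code) decides whether bytes come from cache or from S3.
        with open(self.paths[index], "rb") as f:
            return f.read()


def make_loader(root="/mnt/alluxio_fuse/training_datasets",
                batch_size=32, num_workers=4):
    """Hypothetical helper that wraps the dataset in a PyTorch DataLoader."""
    # Imported lazily so the dataset class is usable without PyTorch installed.
    from torch.utils.data import DataLoader

    return DataLoader(
        FileBytesDataset(root),
        batch_size=batch_size,
        shuffle=True,
        num_workers=num_workers,
        # Raw byte strings vary in length, so skip tensor collation
        # and return each batch as a plain list of samples.
        collate_fn=list,
    )
```

Iterating over make_loader() once per epoch re-reads the same files, which is the access pattern the article says benefits from caching. A real pipeline would decode each sample (for example, into image tensors) inside __getitem__ rather than return raw bytes.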

Platform Integration with Existing Systems

To integrate Alluxio with the existing platform, users can deploy an Alluxio cluster between compute engines and storage systems. On the compute engine side, Alluxio integrates seamlessly with popular machine learning frameworks like PyTorch, Apache Spark, TensorFlow and Ray. Enterprises can integrate Alluxio with these compute frameworks via REST API, POSIX API or S3 API.

On the storage side, Alluxio connects with all types of filesystems or object storage in any location, whether on-premises, in the cloud, or both. Supported storage systems include Amazon S3, Google GCS, Azure Blob Storage, MinIO, Ceph, HDFS, and more.

Alluxio runs both on-premises and in the cloud, in either bare-metal or containerized environments. Supported cloud platforms include AWS, GCP and Azure Cloud.

Application Infrastructure

PR Newswire | January 09, 2024

Flip Electronics and Ampleon have joined forces to extend the supply of Ampleon's LDMOS portfolio of high-performance RF transistors to customers worldwide. Flip Electronics, the fastest growing authorized distributor of electronic components, provides supply chain solutions for extended manufacturing of legacy OEM-authorized electronic components. Ampleon is a global leader in RF power devices. The Ampleon LDMOS portfolio offers solutions for industrial, scientific, medical, broadcast, navigation and safety radio applications, along with applications for 4G LTE and 5G NR infrastructures.

"We chose to transfer Ampleon's LDMOS portfolio to Flip Electronics because they have been very successful in extending the lifecycle of semiconductors through inventory acquisition, wafer procurement and licensing IP from original manufacturers. Flip Electronics' ability to continue manufacturing our legacy parts was a paramount asset. We are very confident in Flip's commitment to ensuring our clients have long-term access to Ampleon legacy products," said Vincent Gerritsma, CEO of Ampleon.

"Flip is always looking for new ways to make a difference for our suppliers and customers. This strategic agreement is a perfect example. Ampleon is an outstanding brand within the RF power industry. Adding a premier RF supplier to our expanding portfolio of who's who in the semiconductor industry broadens our ability to support the long-term needs of our mutual customers. Uninterrupted manufacturing of Ampleon's portfolio will enable us to support customers for the next 20+ years. Our customers can also be assured that the products will be certified and guaranteed by Flip Electronics and 100% authorized by the original manufacturer, Ampleon," said Jason Murphy, CEO of Flip Electronics.

About Flip Electronics

Based in Alpharetta, Georgia, since 2015, Flip Electronics is an authorized electronic components distributor and extended life manufacturer that works closely with the world's leading original equipment manufacturers (OEMs) and contract manufacturers to create supply chain solutions for customers impacted by industry shortages and product obsolescence. Flip leverages its supplier relationships and supply chain expertise to help customers reliably, efficiently and cost-effectively source authorized components that extend their products' lifecycles.

About Ampleon

Created in 2015 and headquartered in the Netherlands, Ampleon is shaped by nearly 60 years of RF Power leadership. The company aims to advance society through innovative RF solutions based on GaN and LDMOS technologies. Ampleon is dedicated to being the partner of choice by delivering high-quality, high-performance RF products with its world-class talent. The portfolio offers flexibility in scaling design and production for any volume and addresses applications for 4G LTE, 5G NR infrastructure, industrial, scientific, medical, broadcast, navigation and safety radio applications. Proven reliability, secure supply and excellent product consistency enable the highest manufacturing yields for customers who benefit from Ampleon being a one-stop partner for RF Power solutions.

Application Infrastructure

PR Newswire | January 12, 2024

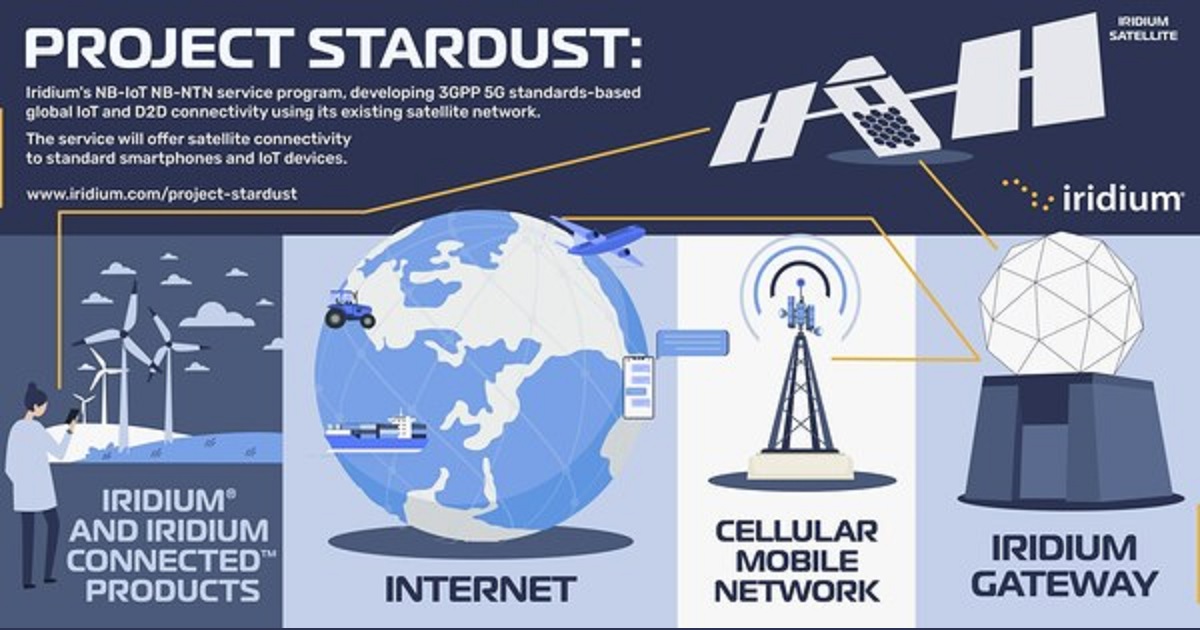

Iridium Communications Inc., a leading provider of global voice and data satellite communications, today announced Project Stardust, the evolution of its direct-to-device (D2D) strategy with 3GPP 5G standards-based Narrowband-Internet of Things (NB-IoT) Non-Terrestrial Network (NB-NTN) service development. As a new standards-based solution, it will be deployed on Iridium's existing satellite network giving the company a unique ability to offer both high-quality proprietary and standardized D2D and IoT services to its customers.

The early stages of programming Iridium's low-Earth orbiting (LEO) satellites offer a special opportunity to smartphone companies, OEMs, chipmakers, mobile network operators (MNO) and related IoT developers to have their requirements woven into the fabric of the Iridium® network. Iridium is already collaborating directly with several of these companies.

"This is an exciting moment for Iridium and is a testament to the flexibility and capability built into our satellite constellation," said Iridium CEO Matt Desch. "The industry is moving quickly towards a more standards-based approach, and after surveying the field, we found that we're the best positioned to lead the way using our own network, particularly given our true global coverage."

Iridium is designing its initial NB-IoT offering to support 5G NTN messaging and SOS capabilities for smartphones, tablets, cars, and related consumer applications. Adopting the service will enable device manufacturers to add a satellite connection to standardized devices, take advantage of existing, globally allocated and coordinated Iridium spectrum, and provide a superior low-latency LEO user experience. The Iridium network supports approximately 1,300 SOS and emergency (911 or equivalent) incidents per year around the world and has readily available systems, processes, and partners to implement this capability for new devices.

Iridium understands the market need for its customers to develop and certify products quickly. Applying our established onboarding processes, chipmakers and NB-IoT developers can join Iridium's ecosystem of about 500 partners, and choose a proprietary, standards-based, or dual-solution integration approach for added network redundancy. MNOs will have the opportunity to be a one-stop shop for ubiquitous coverage and off-grid use cases, with unmatched industry reliability. Iridium partners are supported by 24/7 customer support, back-office, billing, and provisioning systems, all ready to support the new service upon launch.

The Iridium satellite constellation's fully crosslinked LEO architecture and global L-band spectrum provide a competitive service advantage versus other LEO and geostationary satellite networks. Certified to provide safety of life services by international regulatory bodies, the Iridium network has become the gold standard of reliability and continues to be the only network that provides connectivity everywhere on Earth. Operating in LEO, the Iridium constellation does not suffer from the line-of-sight limitations, significant power requirements or single-satellite outages affecting entire regions that geostationary systems face.

The recognized leader in satellite IoT and personal communications, Iridium has more than two decades of experience and an unmatched partner ecosystem supporting more than 2.2 million users around the world. As of the third quarter of 2023, Iridium subscribers have grown at a 15% CAGR over the last five years, and the company serves approximately 1.7 million IoT customers today, including about 900,000 personal trackers and satellite messengers for consumer, enterprise, and government applications. Known for its reliability, coverage, and low power requirements, the Iridium network is an ideal fit for NB-IoT NTN service.

The company is currently working with several D2D and IoT-focused companies to understand and incorporate their use cases, requirements, and end-user needs into its planned service. The company anticipates testing to begin in 2025, with service in 2026.

About Iridium Communications Inc.

Iridium® is the only mobile voice and data satellite communications network that spans the entire globe. Iridium enables connections between people, organizations and assets to and from anywhere, in real time. Together with its ecosystem of partner companies, Iridium delivers an innovative and rich portfolio of reliable solutions for markets that require truly global communications. In 2019, the company completed a generational upgrade of its satellite network and launched its specialty broadband service, Iridium Certus®. Iridium Communications Inc. is headquartered in McLean, Va., U.S.A., and its common stock trades on the Nasdaq Global Select Market under the ticker symbol IRDM.
